This is another blog post about running totals and again I will use CLR and demonstrate the power of CLR in such situations. Again this post is inspired by an article on SQL Server Central – Calculate the Running Total for the last five Transactions by Divya Agrawal.

This is a kind of running totals when we want to sum only a specific number of last rows.

The CLR function will be nothing than a slightly modification of the running totals function from my previous blog post: SQL Sever and fastest running totals using CLR.

I’ve updated this blog post based on the update of mentioned previous post, after communication with Paul White and added the security check into the CLR function to detect processing of rows out of expected order. Please read the previous post for more details.

Test data preparation

I will use sample data from the article, so it is possible to compare the solutions and we will be able to see the difference.

CREATE TABLE Accounts

(

ID int IDENTITY(1,1),

TransactionDate datetime,

Balance float

)

GO

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/1/2000',100)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/2/2000',101)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/3/2000',102)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/4/2000',103)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/5/2000',104)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/6/2000',105)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/7/2000',106)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/8/2000',107)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/9/2000',108)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/10/2000',109)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/11/2000',200)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/12/2000',201)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/13/2000',202)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/14/2000',203)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/15/2000',204)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/16/2000',205)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/17/2000',206)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/18/2000',207)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/19/2000',208)

INSERT INTO Accounts(TransactionDate,Balance) VALUES ('1/20/2000',209)

GO

CLR Solution

As I mentioned above the CLR solution is only a modification of function from previous blog post. We only add a queue of last calculated running totals.

The image above displays situation when we want to make running total for last 3 rows. Our solution will calculate the running total continuously as it is done in column B, but when the requested count of 3 rows is met, it will subtract the running total 3 rows back as can be seen on the image in column B. For this we need in our function a queue of the last X running total values (In this example 3). This class also implements the security check introduced in my previously updated article related to Running Totals. The security check consist in providing the correct row numbers in the sequence in which the records should be processed and in case the records are processed out of expected order, an exception is fired.

using System;

using Microsoft.SqlServer.Server;

using System.Runtime.Remoting.Messaging;

using System.Data.SqlTypes;

using System.Collections.Generic;

/// <summary>

/// Class contains CLR scalar functions for calculation of running totals

/// </summary>

public class RunningTotalsQueue

{

/// <summary>

/// Storage Structure for holding actual Total and row number for security check.

/// </summary>

/// <typeparam name="T">Totals Data Type</typeparam>

private class RtStorage<T> where T : struct

{

public T Total;

public int RowNo;

public Queue<T> Queue;

public RtStorage(int queueLength)

{

Queue = new Queue<T>(queueLength);

}

}

/// <summary>

/// Calculates a running totals on Int (Int32) data type

/// </summary>

/// <param name="val">Value of current row</param>

/// <param name="id">ID of the function in single query</param>

/// <param name="queueLength">Length of the queue. 0 for classical running totals</param>

/// <param name="rowNo">Specifies expected rowNo. It is for security check to ensure correctness of running totals</param>

/// <param name="nullValue">Value to be used for NULL values</param>

/// <param name="nullForLessRows">Specifies whether return NULL if less values than queue are summed</param>

/// <returns>SqlInt64 representing running total</returns>

[SqlFunction(IsDeterministic = true)]

public static SqlInt64 RunningTotalBigIntQueue(SqlInt64 val, SqlByte id, int queueLength, int rowNo, SqlInt64 nullValue, bool nullForLessRows)

{

string dataName = string.Format("MultiSqlRtQueue_{0}", id.IsNull ? 0 : id.Value);

object lastSum = CallContext.GetData(dataName);

RtStorage<SqlInt64> storage;

if (lastSum != null)

storage = (RtStorage<SqlInt64>)lastSum;

else

CallContext.SetData(dataName, storage = new RtStorage<SqlInt64>(queueLength));

storage.RowNo++;

if (storage.RowNo != rowNo)

throw new System.InvalidOperationException(string.Format("Rows were processed out of expected order. Expected RowNo: {0}, received RowNo: {1}", storage.RowNo, rowNo));

if (!val.IsNull)

storage.Total = storage.Total.IsNull ? val : storage.Total + val;

else

storage.Total = storage.Total.IsNull ? nullValue : (nullValue.IsNull ? storage.Total : storage.Total + nullValue);

var currentTotal = storage.Total;

if (queueLength > 0 && storage.Queue.Count == queueLength)

{

var lastQueue = storage.Queue.Dequeue();

currentTotal -= lastQueue.IsNull ? 0 : lastQueue;

}

else if (storage.Queue.Count < queueLength && nullForLessRows)

currentTotal = SqlInt64.Null;

if (queueLength > 0)

storage.Queue.Enqueue(storage.Total);

return currentTotal;

}

}

On first call the function allocates a queue of requested queue size inside a private storage class and stores it using the CallContext for use by future calls. The function also allows returning NULL when the count of rows processed is lower than the number of rows requested to be calculated in the running total and also allows calculation of classical running totals (not queued) in case the queue length is equal to 0.

CREATE FUNCTION [dbo].[fn_RunningTotalBigIntQueue]( @val [bigint], --value to be added to running total (a fiedl in the query) @id [tinyint], --id of the running total within a single query @queueLength [int], --lenght of the queue @rowNo [int], --RowNumber of processed records. This is compared to espected rowNo and in case out of synchronization an exceiption is fired @nullValue [bigint] = NULL, --representation of the NULL value when adding to running totals @nullForLesRows [bit] = 0 --specifies whether return NULL if less records than queueLenght were processed ) RETURNS [bigint] WITH EXECUTE AS CALLER AS EXTERNAL NAME [SqlClrTotals].[RunningTotalsQueue].[RunningTotalBigIntQueue] GO

Tests

Once we have compiled the sources, created assembly and function in DB, we can make a tests. First we will test the T-SQL solution from the article mentioned at the beginning of this blog post and then the presented CLR solution.

T-SQL solution

SET STATISTICS IO ON

GO

SELECT

Acc.ID,CONVERT(varchar(10),TransactionDate,101) AS TransactionDate ,

Balance,

isnull(RunningTotal,'') AS RunningTotal

FROM Accounts Acc

LEFT OUTER JOIN (SELECT ID,sum(Balance) AS RunningTotal

FROM (SELECT A.ID AS ID,B.ID AS BID, B.Balance

FROM Accounts A

cross JOIN Accounts B

WHERE B.ID BETWEEN A.ID-4

AND A.ID AND A.ID>4

) T

GROUP BY ID ) Bal

ON Acc.ID=Bal.ID

GO

SET STATISTICS IO OFF

GO

Results:

ID TransactionDate Balance RunningTotal ----------- --------------- ---------------------- ---------------------- 1 01/01/2000 100 0 2 01/02/2000 101 0 3 01/03/2000 102 0 4 01/04/2000 103 0 5 01/05/2000 104 510 6 01/06/2000 105 515 7 01/07/2000 106 520 8 01/08/2000 107 525 9 01/09/2000 108 530 10 01/10/2000 109 535 11 01/11/2000 200 630 12 01/12/2000 201 725 13 01/13/2000 202 820 14 01/14/2000 203 915 15 01/15/2000 204 1010 16 01/16/2000 205 1015 17 01/17/2000 206 1020 18 01/18/2000 207 1025 19 01/19/2000 208 1030 20 01/20/2000 209 1035 (20 row(s) affected) Table 'Accounts'. Scan count 37, logical reads 37, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

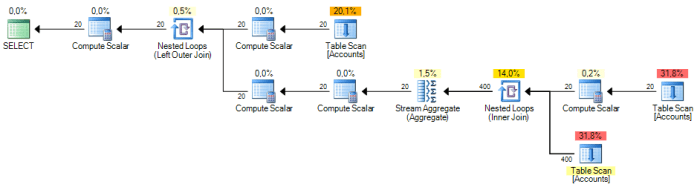

We cab see, that for only a few records and the T-SQL solution produced 37 logical reads and the query plan is quite complex. And there is a lot of table scans.

If we add a clustered primary key on the ID

ALTER TABLE dbo.Accounts ADD CONSTRAINT PK_Accounts PRIMARY KEY CLUSTERED(ID)

Then the plan will be better as we will replace the three table scans in the query plan by one clustered index scan and two clustered index seeks. But even now the plan is quite complex.

CLR Solution test

SET STATISTICS IO ON

GO

SELECT

Acc.ID

,CONVERT(varchar(10),TransactionDate,101) AS TransactionDate

,Balance

,ISNULL(dbo.fn_RunningTotalBigIntQueue(Balance, 0, 5, ROW_NUMBER() OVER(ORDER BY ID), NULL, 1), 0) AS RunningTotal

FROM Accounts Acc

ORDER BY ID

OPTION(MAXDOP 1)

GO

SET STATISTICS IO OFF

GO

Here we have results:

ID TransactionDate Balance RunningTotal ----------- --------------- ---------------------- ---------------------- 1 01/01/2000 100 0 2 01/02/2000 101 0 3 01/03/2000 102 0 4 01/04/2000 103 0 5 01/05/2000 104 510 6 01/06/2000 105 515 7 01/07/2000 106 520 8 01/08/2000 107 525 9 01/09/2000 108 530 10 01/10/2000 109 535 11 01/11/2000 200 630 12 01/12/2000 201 725 13 01/13/2000 202 820 14 01/14/2000 203 915 15 01/15/2000 204 1010 16 01/16/2000 205 1015 17 01/17/2000 206 1020 18 01/18/2000 207 1025 19 01/19/2000 208 1030 20 01/20/2000 209 1035 (20 row(s) affected) Table 'Accounts'. Scan count 1, logical reads 1, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

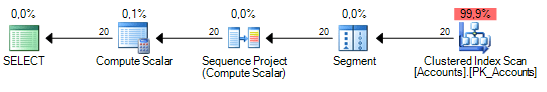

Here we can see, that the CLR solution produced exactly the same output as the T-SQL solution. What more, we have only 1 logical read as the table is small and we have also received a very simple execution plan with single Table Scan and Sort. The segment and Sequence Project(Compute Scalar) operators are the ROW_NUMBER() function for security check. The compute Scalar is our function computing the running totals.

In case of the Clustered Primary Key the plan will contain one Clustered Index scan without sort as the Clustered Key is already in our expected order.

Conclusion

As we can see from the simple test here, the CLR solution is unbeatable for such purposes. Even if we use other T-SQL solution like “quirky updates”, even then the CLR will have less reads and should be quicker.

When increasing the number of records and increasing the last X number, then with higher number count, the T-SQL solution will kill even high end servers. On the other side you will see nearly no difference in speed when using the CLR solution. As it only needs to allocate one queue of last X elements. Even if we want to count last 1000 000 rows, then it only needs to allocate such queue and in case of bigint it will 8 MB + some overhead of the queue.

Of course the CLR solution has the issues with processing rows in right order, but for that purposes there is the security check implemented so in case rows are processed in other than expected order an exception is thrown. But in most cases the scenario is very simple and you need to calculate the total from a simple table and in such cases this solution work without bigger problems. In case of complex queries with JOINS or other windowing functions, records can be processed into a temp table and then the running totals calculated on the temporary table.

Hi Pavel,

I was wondering if you have a version of this CLR were you reset the running total to 0 if say an account number was to change. I would want to pass through the account code to the function and if it is different to the previous row then reset the running total to 0. I do not have C# experience so am not confident to make these changes myself.

Laura

Hi,

This is quite simple. You add another parameter (The account Number) to the function. Then you include it in the

RTStoragestructure for storing the running total and check it whether it has changed. If so, simply reset the RunningTotal to 0.In case the Account Number will be of type int, it could look like:

private struct RtStorage where T : struct { public T Total; public int RowNo; public int LastAccountNo; //here we will store the last account number } [SqlFunction(IsDeterministic = true)] public static SqlMoney RunningTotalMoney(SqlMoney val, SqlByte id, int rowNo, SqlMoney nullValue, int AccountNumber) { string dataName = string.Format("MultiSqlRt_{0}", id.IsNull ? 0 : id.Value); object lastSum = CallContext.GetData(dataName); var storage = lastSum != null ? (RtStorage)lastSum : new RtStorage(); storage.RowNo++; if (storage.RowNo != rowNo) throw new System.InvalidOperationException(string.Format("Rows were processed out of expected order. Expected RowNo: {0}, received RowNo: {1}", storage.RowNo, rowNo)); if (storage.LastAccountNo != AccountNumber) { storage.Total = 0; storage.LastAccountNo = AccountNumber; } if (!val.IsNull) storage.Total = storage.Total.IsNull ? val : storage.Total + val; else storage.Total = storage.Total.IsNull ? nullValue : (nullValue.IsNull ? storage.Total : storage.Total + nullValue); CallContext.SetData(dataName, storage); return storage.Total; }Thanks Pavel for the reply! Much appreciated.

This works perfectly for the resetting the running total. However I am running into issues when I try to divide one running total by another. I was previosuly not having issues with this. I am getting the rows processed out of order error.

The use of nullif is not helping either! 🙂

I amended your account table to include an account number and populated with sample data

CREATE TABLE [dbo].[Accounts](

[ID] [int] IDENTITY(1,1) NOT NULL,

[AccountNumber] [int] NULL,

[TransactionDate] [datetime] NULL,

[Balance] [float] NULL

) ON [PRIMARY]

GO

--========================================

-- Test Query 1

--========================================

SET STATISTICS IO ON

SET STATISTICS TIME ON

GO

SELECT

ID,

Balance,

-- dbo.fn_RunningTotalfloat(Balance, 0, ROW_NUMBER() OVER(ORDER BY ID), null, AccountNumber) as RunningTotal,

dbo.fn_RunningTotalfloat(Balance, 0, ROW_NUMBER() OVER(ORDER BY ID), null, AccountNumber) / dbo.fn_RunningTotalfloat(Balance, 0, ROW_NUMBER() OVER(ORDER BY ID), null, AccountNumber)

FROM dbo.Accounts

ORDER BY ID

SET STATISTICS TIME OFF

SET STATISTICS IO OFF

GO

Any ideas.

Hi,

as you have mentioned, you receive an error, that the rows are being processed out of order.

It means, that the query plan has changed and do not process the rows in the order of ORDER BY Clause. This is a drawback of the CLR way. You can try to play a little with the query to get the appropriate plan. Anyway the plan can change with amount of rows processed and many other factors.

Currently I have no possibility to check this for you and eventually give you suggestion which will lead to plan which suits your scenario. I can check this next week.

Anyway if the query worked for you when without the division, as a workaround, you can query the data into a temp table without the division and do the division in subsequent query.