When you deploy an SSIS project to the SSIS Catalog, you can configure the deployed project so that you do not need to pass parameter values each time you execute its packages. You can configure the project to use explicitly provided values, or you can reference environment variables.

This is a very useful feature. However, if you use multiple environments such as DEV, TEST, QA and PROD, you have to maintain that configuration in each of them. To simplify setting up the parameters, you can script the configuration.

The pitfall of scripting the configuration is that SSMS lets you do it only during the initial setup. Once you confirm the configuration values in SSMS, there is no way to script out an existing configuration.

sp_SSISCloneConfiguration

sp_SSISCloneConfiguration is a custom stored procedure that clones (copies) configurations between projects in the same SSISDB catalog. It can also generate reusable scripts, so the configurations can easily be re-applied, even on a different SSIS server.

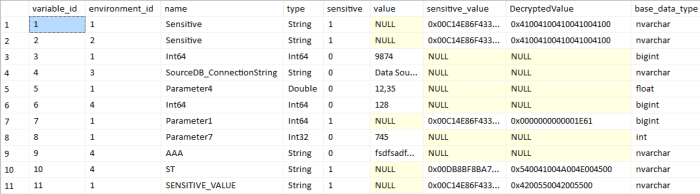

The stored procedure also takes care of re-encrypting sensitive values when they are cloned. SSIS uses a different encryption key for each project in the SSIS catalog, so simply copying the encrypted values between projects does not work.

The stored procedure executes in the context of the AllSchemaOwner database user in SSISDB, which ensures access to all areas of SSISDB, including the certificates and symmetric keys needed for scripting. The actual re-application of the configuration runs in the context of the caller, because the internal SSIS catalog stored procedures for configuring projects need to be executed under Windows credentials.

The create script grants EXECUTE permission to the ssis_admin database role in the SSISDB catalog so that SSIS admins can use the stored procedure.

Be careful: anyone with EXECUTE permission on the stored procedure can see decrypted sensitive values when printing the script. By default, the script grants that permission to the ssis_admin database role. If you want to restrict that functionality to sysadmins only, remove the GRANT at the end of the script.
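If the procedure has already been created with the default grant, the permission can also be removed afterwards. The statement below is only a sketch: it assumes the procedure was created in the dbo schema of SSISDB, which the text above does not state, so adjust the schema name if your installation differs.

```sql
USE SSISDB;

-- Assumption: the procedure lives in the [dbo] schema.
-- Removes the EXECUTE permission that the create script granted to ssis_admin,
-- leaving the procedure usable by sysadmins only.
REVOKE EXECUTE ON OBJECT::[dbo].[sp_SSISCloneConfiguration] FROM [ssis_admin];
```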

The complete source code for the sp_SSISCloneConfiguration is available in my SQL-Scripts project on GitHub in the SSISDB folder.

The syntax of the stored procedure is as follows:

sp_SSISCloneConfiguration

@sourceFolder = 'Source Folder Name'

,@sourceProject = 'Source Project Name'

,@sourceObject = 'Optional Source Object Name'

,@destinationFolder = 'Optional Destination Folder Name'

,@destinationProject = 'Optional Destination Project Name'

,@printScript = 1 --Specifies whether only script should be printed

,@decryptSensitiveInScript = 1 --Specifies whether sensitive values should be decrypted in script

When the procedure copies the configuration, it applies the source configurations to the target. Any other configurations that already exist on the target are not touched by the cloning script. To get an exact copy of the source configuration, reset the configurations on the target project first.
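One possible way to reset the target before cloning is to clear every parameter that currently has a server-side value. The following is a minimal sketch, not part of sp_SSISCloneConfiguration. It uses the built-in catalog.object_parameters view and the catalog.clear_object_parameter_value procedure. The folder and project names are hypothetical, and the sketch assumes that value_set = 1 identifies the configured parameters.

```sql
USE SSISDB;

DECLARE @folder  nvarchar(128) = N'Test';         -- hypothetical target folder
DECLARE @project nvarchar(128) = N'DataLoading';  -- hypothetical target project

DECLARE @objectType smallint, @objectName nvarchar(260), @parameterName nvarchar(128);

-- STATIC cursor: snapshot the list first, because the loop changes the underlying data.
DECLARE cfg CURSOR LOCAL STATIC FOR
    SELECT op.object_type, op.object_name, op.parameter_name
    FROM [catalog].[object_parameters] op
    INNER JOIN [catalog].[projects] p ON p.project_id = op.project_id
    INNER JOIN [catalog].[folders] f ON f.folder_id = p.folder_id
    WHERE f.name = @folder
      AND p.name = @project
      AND op.value_set = 1;   -- assumption: only parameters with a configured value

OPEN cfg;
FETCH NEXT FROM cfg INTO @objectType, @objectName, @parameterName;

WHILE @@FETCH_STATUS = 0
BEGIN
    -- Revert the parameter to its design-time default.
    EXEC [catalog].[clear_object_parameter_value]
         @folder_name    = @folder
        ,@project_name   = @project
        ,@object_type    = @objectType      -- 20 = project, 30 = package
        ,@parameter_name = @parameterName
        ,@object_name    = @objectName;

    FETCH NEXT FROM cfg INTO @objectType, @objectName, @parameterName;
END

CLOSE cfg;
DEALLOCATE cfg;
```

Review the list returned by the SELECT before running the loop, since clearing also removes values that were configured on the target on purpose.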

The generated script is also easily reusable, as two variables at the beginning specify the destination folder and project. By simply modifying those variables, you can apply the configuration to different projects in different folders.
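The two typical ways of calling the procedure can be sketched as follows. The folder and project names (DEV, Test, DataLoading) are placeholders, and the calls assume the procedure can be resolved by its unqualified name once SSISDB is the current database.

```sql
USE SSISDB;

-- 1) Clone directly: copy the configuration of DEV\DataLoading
--    to Test\DataLoading within the same SSISDB catalog.
EXEC sp_SSISCloneConfiguration
     @sourceFolder       = N'DEV'          -- hypothetical source folder
    ,@sourceProject      = N'DataLoading'  -- hypothetical source project
    ,@destinationFolder  = N'Test'         -- hypothetical destination folder
    ,@destinationProject = N'DataLoading'
    ,@printScript        = 0;              -- apply immediately, do not print a script

-- 2) Script only: print a reusable script for DEV\DataLoading.
--    With @decryptSensitiveInScript = 0, sensitive values are scripted as NULL.
EXEC sp_SSISCloneConfiguration
     @sourceFolder             = N'DEV'
    ,@sourceProject            = N'DataLoading'
    ,@printScript              = 1
    ,@decryptSensitiveInScript = 0;
```

The second call prints the script to the Messages output, from where it can be copied and run against another SSIS server.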

Parameters

The procedure has several parameters; some are required and some are optional.

The required parameters are @sourceFolder and @sourceProject. The @sourceObject, @destinationFolder, @destinationProject, @printScript and @decryptSensitiveInScript parameters are optional.

@sourceFolder

Specifies the folder in which the source project is located.

@sourceProject

Specifies the name of the project within the source folder whose configuration should be scripted.

@sourceObject

Optional name of the source object. It specifies the name of the object within the project whose configuration should be scripted. It can be the project name or an individual package name. When provided, only configurations related to that object are cloned or scripted.

@destinationFolder

Name of the folder containing the destination project to which the configuration should be cloned. This parameter is optional; if it is not provided, the source folder name is used.

@destinationProject

Name of the destination project to which the configuration should be cloned. This parameter is optional; if it is not provided, the name of the source project is used. If the destination is not provided or matches the source (both folder and project are the same), @printScript is forced to 1 and the script is always generated.

@printScript

Specifies whether the script should be printed to the output window. If so, you can simply copy the script from the output window and apply it immediately on another SSIS instance.

The default value is 0, which means the procedure executes within the SSISDB catalog and clones the configuration directly. The exception is when the destination is not provided or matches the source; in that case the value is forced to 1.

@decryptSensitiveInScript

Specifies whether sensitive values should be decrypted in the script. If so, the values appear in the script in plain text; however, they are marked as sensitive and will be encrypted again when the script is applied.

If the parameter is 0 (the default), sensitive values are not scripted out and NULL is scripted instead. You must then fill in the missing values before executing the script.

Sample Output

Below is a sample script generated by the stored procedure when @printScript = 1 and @decryptSensitiveInScript = 1:

RAISERROR(N'sp_SSISCloneConfiguration v0.10 (2016-12-18) (C) 2016 Pavel Pawlowski', 0, 0) WITH NOWAIT;

RAISERROR(N'=====================================================================', 0, 0) WITH NOWAIT;

DECLARE @destinationFolder nvarchar(128) = N'Test' --Specify Destination Folder Name

DECLARE @destinationProject nvarchar(128) = N'DataLoading' --Specify Destination Project Name

--Checking for destination folder existence

IF NOT EXISTS(SELECT 1 FROM [SSISDB].[catalog].[folders] WHERE [name] = @destinationFolder)

BEGIN

RAISERROR(N'Destination folder [%s] does not exists.', 15, 0, @destinationFolder) WITH NOWAIT;

RETURN;

END

--Checking for destination project existence

IF NOT EXISTS(SELECT 1

FROM [SSISDB].[catalog].[projects] p

INNER JOIN [SSISDB].[catalog].[folders] f ON f.folder_id = p.folder_id

WHERE f.name = @destinationFolder AND p.name = @destinationProject)

BEGIN

RAISERROR(N'Destination project [%s]\[%s] does not exists.', 15, 1, @destinationFolder, @destinationProject) WITH NOWAIT;

RETURN;

END

--Project parameters configuration

DECLARE @var sql_variant

RAISERROR(N'Creating Configuration [SSISDB]\[%s]\[%s]\[DataLoading]\[SourceDB_ConnectionString]', 0, 0, @destinationFolder, @destinationProject) WITH NOWAIT;

EXEC [SSISDB].[catalog].[set_object_parameter_value] @object_type=20, @parameter_name = N'SourceDB_ConnectionString', @object_name = @destinationProject, @folder_name = @destinationFolder, @project_name = @destinationProject, @value_type = 'R', @parameter_value = N'SourceDB_ConnectionString'

RAISERROR(N'Creating Configuration [SSISDB]\[%s]\[%s]\[DataLoading]\[Interval]', 0, 0, @destinationFolder, @destinationProject) WITH NOWAIT;

SET @var = CONVERT(int, N'120');

EXEC [SSISDB].[catalog].[set_object_parameter_value] @object_type=20, @parameter_name = N'Interval', @object_name = @destinationProject, @folder_name = @destinationFolder, @project_name = @destinationProject, @value_type = 'V', @parameter_value = @var

RAISERROR(N'Creating Configuration [SSISDB]\[%s]\[%s]\[DataLoading]\[SecurePassword]', 0, 0, @destinationFolder, @destinationProject) WITH NOWAIT;

SET @var = CONVERT(sql_variant, N'Pa$$w0rd'); --SENSITIVE

EXEC [SSISDB].[catalog].[set_object_parameter_value] @object_type=20, @parameter_name = N'SecurePassword', @object_name = @destinationProject, @folder_name = @destinationFolder, @project_name = @destinationProject, @value_type = 'V', @parameter_value = @var

RAISERROR(N'Creating Configuration [SSISDB]\[%s]\[%s]\[DataLoad.dtsx]\[SourceTableName]', 0, 0, @destinationFolder, @destinationProject) WITH NOWAIT;

SET @var = CONVERT(sql_variant, N'Person');

EXEC [SSISDB].[catalog].[set_object_parameter_value] @object_type=30, @parameter_name = N'SourceTableName', @object_name = N'DataLoad.dtsx', @folder_name = @destinationFolder, @project_name = @destinationProject, @value_type = 'V', @parameter_value = @var

RAISERROR(N'-----------------------------------------------------------------------------', 0, 0) WITH NOWAIT;

RAISERROR(N'There are configurations using Environment varaibles references.', 0, 0) WITH NOWAIT;

RAISERROR(N'DON''T FORGET TO SET ENVIRONMENT REFERENCES for project [%s]\[%s].', 0, 0, @destinationFolder, @destinationProject) WITH NOWAIT

RAISERROR(N'-----------------------------------------------------------------------------', 0, 0) WITH NOWAIT;
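The reminder at the end of the generated script refers to environment references, which the script itself does not create. One way to add such a reference after applying the script is the built-in catalog.create_environment_reference procedure. This is a sketch only: the environment name TestEnvironment is hypothetical, and the environment is assumed to exist in the same folder as the project and to contain a variable named SourceDB_ConnectionString.

```sql
DECLARE @referenceId bigint;

-- Create a relative reference ('R'): the environment is looked up
-- in the same folder as the project.
EXEC [SSISDB].[catalog].[create_environment_reference]
     @folder_name      = N'Test'             -- destination folder from the sample script
    ,@project_name     = N'DataLoading'      -- destination project from the sample script
    ,@environment_name = N'TestEnvironment'  -- hypothetical environment name
    ,@reference_type   = 'R'
    ,@reference_id     = @referenceId OUTPUT;

-- The returned id identifies the reference, for example when starting an execution.
SELECT @referenceId AS reference_id;
```

For an environment stored in a different folder, an absolute reference ('A') together with @environment_folder_name would be used instead.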