This blog post is inspired by forum thread on SQL Server Central Convert CSV values in three columns to rows.

On many sites there are comparison of string splitting functions using pure T-SQL. But not much of them also mention the CLR way of doing it.

On small strings with a few elements the T-SQL solution is unbeatable in speed. But when the number of elements in the string increase, the situation is completely different.

In this comparison I will use one of the quickest T-SQL solution vs. a CLR solution using regular expressions.

For the fastest CLR solution, check my blog post: Fastest CSV strings splitting using CLR (T-SQL vs. CLR revisited).

Probably the quickest known T-SQL solution is function mentioned by Jeff Moden in the forum thread http://www.sqlservercentral.com/Forums/FindPost944589.aspx.

CREATE FUNCTION [dbo].[DelimitedSplit8K]

--/***************************************************************************************************

-- Purpose:

-- Split a given string at a given delimiter and return a list of the split elements (items).

-- Returns:

-- iTVF containing the following:

-- ItemNumber = Element position of Item as a BIGINT (not converted to INT to eliminate a CAST)

-- Item = Element value as a VARCHAR(8000)

-- CROSS APPLY Usage Example:

-----------------------------------------------------------------------------------------------------

----===== Conditionally drop the test tables to make reruns easier for testing.

-- -- (this is NOT a part of the solution)

-- IF OBJECT_ID('tempdb..#JBMTest') IS NOT NULL

-- DROP TABLE #JBMTest

--;

----===== Create and populate a test table on the fly (this is NOT a part of the solution).

-- SELECT *

-- INTO #JBMTest

-- FROM (

-- SELECT 1,'1,10,100,1000,10000,100000,1000000' UNION ALL

-- SELECT 2,'2000000,200000,20000,2000,200,20,2' UNION ALL

-- SELECT 3, 'This,is,a,test' UNION ALL

-- SELECT 4, 'and so is this' UNION ALL

-- SELECT 5, 'This, too (no pun intended)'

-- ) d (SomeID,SomeValue)

--;

--GO

----===== Split the CSV column for the whole table using CROSS APPLY (this is the solution)

-- SELECT test.SomeID, split.ItemNumber, split.Item

-- FROM #JBMTest test

-- CROSS APPLY

-- (

-- SELECT ItemNumber, Item

-- FROM dbo.DelimitedSplit8k(test.SomeValue,',')

-- ) split

--;

-----------------------------------------------------------------------------------------------------

-- Notes:

-- 1. Optimized for VARCHAR(7999) or less. No testing or error reporting for truncation at 7999

-- characters is done.

-- 2. Optimized for single character delimiter. Multi-character delimiters should be resolved

-- externally from this function.

-- 3. Optimized for use with CROSS APPLY.

-- 4. Does not "trim" elements just in case leading or trailing blanks are intended.

-- 5. If you don't know how a Tally table can be used to replace loops, please see the following...

--http://www.sqlservercentral.com/articles/T-SQL/62867/

-- 6. Changing this function to use VARCHAR(MAX) will cause it to run twice as slow. It's just the

-- nature of VARCHAR(MAX) whether it fits in-row or not.

-- 7. Multi-machine testing for the method of using UNPIVOT instead of 10 SELECT/UNION ALLs shows

-- that the UNPIVOT method is quite machine dependent and can slow things down quite a bit.

-- 8. Performance testing shows using "TOP" for the limiting criteria of "N" is actually

-- slower and slightly more CPU intensive than the traditional WHERE N < LEN(@pString) + 2.

-- 9. Performance testing shows using ORDER BY (SELECT x) where "x" is anything is actually

-- slower and slightly more CPU intensive than the traditional ORDER BY (SELECT N).

-- Credits:

-- This code is the product of many people's efforts including but not limited to the following:

-- cteTally concept originally by Iztek Ben Gan and "decimalized" by Lynn Pettis (and others) for a

-- bit of extra speed and finally redacted by Jeff Moden for a different slant on readability and

-- compactness. Hat's off to Paul White for his simple explanations of CROSS APPLY. Finally,

-- special thanks to Erland Sommarskog for his tireless efforts to help people understand

-- what you can actually do with T-SQL. I also thank whoever wrote the first article I ever saw

-- on "numbers tables" which is located at the following URL ...

--http://sqlserver2000.databases.aspfaq.com/why-should-i-consider-using-an-auxiliary-numbers-table.html

-- Revision History:

-- Rev 00 - 20 Jan 2010 - Concept: Lynn Pettis and others.

-- Redaction/Implementation: Jeff Moden

-- - Base 10 redaction and reduction for CTE. (Total rewrite)

-- Rev 01 - 13 Mar 2010 - Jeff Moden

-- - Removed one additional concatenation and one subtraction from the SUBSTRING in the

-- SELECT List for that tiny bit of extra speed.

-- Rev 02 - 14 Apr 2010 - Jeff Moden

-- - No code changes. Added CROSS APPLY usage example to the header, some additional credits,

-- and extra documentation.

-- Rev 03 - 18 Apr 2010 - Jeff Moden

-- - No code changes. Added notes 7, 8, and 9 about certain "optimizations" that dont'

-- actually work for this type of function.

-- Rev 04 - 29 Jun 2010 - Jeff Moden

-- - Added WITH SCHEMABINDING thanks to a note by Paul White. This prevents an unnecessary

-- "Table Spool" when the function is used in an UPDATE statement even though the function

-- makes no external references.

--***************************************************************************************************/

--===== Define I/O parameters

(

@pString VARCHAR(7999),

@pDelimiter CHAR(1)

)

RETURNS TABLE

WITH SCHEMABINDING

AS

RETURN

--===== "Inline" CTE Driven "Tally Table” produces values up to

-- 10,000... enough to cover VARCHAR(8000)

WITH

E1(N) AS ( --=== Create Ten 1's

SELECT 1 UNION ALL SELECT 1 UNION ALL

SELECT 1 UNION ALL SELECT 1 UNION ALL

SELECT 1 UNION ALL SELECT 1 UNION ALL

SELECT 1 UNION ALL SELECT 1 UNION ALL

SELECT 1 UNION ALL SELECT 1 --10

),

E2(N) AS (SELECT 1 FROM E1 a, E1 b), --100

E4(N) AS (SELECT 1 FROM E2 a, E2 b), --10,000

cteTally(N) AS (SELECT ROW_NUMBER() OVER (ORDER BY (SELECT N)) FROM E4)

--===== Do the split

SELECT ROW_NUMBER() OVER (ORDER BY N) AS ItemNumber,

SUBSTRING(@pString, N, CHARINDEX(@pDelimiter, @pString + @pDelimiter, N) - N) AS Item

FROM cteTally

WHERE N < LEN(@pString) + 2

AND SUBSTRING(@pDelimiter + @pString, N, 1) = @pDelimiter

;

GO

For the CLR we will use the RegEx function I mentioned in one of my previous blog post: SQL Server 2005 and SQL Server 2008 Regular Expressions and pattern matching.

To make the comparison more interesting I will use the table structure as Jeff Moden has used in his post http://www.sqlservercentral.com/Forums/FindPost988659.aspx. You can also took on the comparison of different T-SQL solutions made by Jeff in the mentioned thread.

Test data preparation

We will build 3 test data table. One for string with 16 element (CsvTest), one for 100 element (CSVTest3) and one for 1333 elements (CSVTest2). The 1333 elements is near the varchar(8000) for which the T-SQL version of DelimitedSplit8K is optimized.

WITH TallyBase AS (

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N

),

Tally AS (

SELECT

CAST(ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS INT) as N

FROM TallyBase t1, TallyBase t2, TallyBase T3, TallyBase T4

)

SELECT TOP (10000) --Controls the number of rows in the test table

ISNULL(ROW_NUMBER() OVER (ORDER BY(SELECT NULL)),0) AS RowNum,

(

SELECT CAST(STUFF( --=== STUFF get`s rid of the leading comma

( --=== This builds CSV row with a leading comma

SELECT TOP (16) --Controls the number of CSV elements in each row

',' + CAST(ABS(CHECKSUM(NEWID()))%100000 AS VARCHAR(10))

FROM Tally t3 --Classic cross join pseudo-cursor

CROSS JOIN Tally t4 --can produce row sets up 121 million.

WHERE t1.N <> t3.N --Without this line, all rows would be the same

FOR XML PATH('')

)

,1,1,'') AS NVARCHAR(MAX))

) AS CsvParameter

INTO CsvTest

FROM Tally t1 --Classic cross join pseudo-cursor

CROSS JOIN Tally t2; --can produce row sets up 121 million.

GO

ALTER TABLE dbo.CsvTest ADD PRIMARY KEY (RowNum) WITH FILLFACTOR=100

GO

WITH TallyBase AS (

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N

),

Tally AS (

SELECT

CAST(ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS INT) as N

FROM TallyBase t1, TallyBase t2, TallyBase T3, TallyBase T4

)

SELECT TOP (10000) --Controls the number of rows in the test table

ISNULL(ROW_NUMBER() OVER (ORDER BY(SELECT NULL)),0) AS RowNum,

(

SELECT CAST(STUFF( --=== STUFF get's rid of the leading comma

( --=== This builds CSV row with a leading comma

SELECT TOP (100) --Controls the number of CSV elements in each row

','+CAST(ABS(CHECKSUM(NEWID()))%100000 AS VARCHAR(10))

FROM Tally t3 --Classic cross join pseudo-cursor

CROSS JOIN Tally t4 --can produce row sets up 121 million.

WHERE t1.N <> t3.N --Without this line, all rows would be the same

FOR XML PATH('')

)

,1,1,'') AS NVARCHAR(MAX))

) AS CsvParameter

INTO CsvTest3

FROM Tally t1 --Classic cross join pseudo-cursor

CROSS JOIN Tally t2; --can produce row sets up 121 million.

GO

ALTER TABLE dbo.CsvTest3 ADD PRIMARY KEY (RowNum) WITH FILLFACTOR=100

GO

WITH TallyBase AS (

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N

),

Tally AS (

SELECT

CAST(ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS INT) as N

FROM TallyBase t1, TallyBase t2, TallyBase T3, TallyBase T4

)

SELECT TOP (10000) --Controls the number of rows in the test table

ISNULL(ROW_NUMBER() OVER (ORDER BY(SELECT NULL)),0) AS RowNum,

(

SELECT CAST(STUFF( --=== STUFF get's rid of the leading comma

( --=== This builds CSV row with a leading comma

SELECT TOP (1333) --Controls the number of CSV elements in each row

','+CAST(ABS(CHECKSUM(NEWID()))%100000 AS VARCHAR(10))

FROM Tally t3 --Classic cross join pseudo-cursor

CROSS JOIN Tally t4 --can produce row sets up 121 million.

WHERE t1.N <> t3.N --Without this line, all rows would be the same

FOR XML PATH('')

)

,1,1,'') AS NVARCHAR(MAX))

) AS CsvParameter

INTO CsvTest2

FROM Tally t1 --Classic cross join pseudo-cursor

CROSS JOIN Tally t2; --can produce row sets up 121 million.

GO

ALTER TABLE dbo.CsvTest2 ADD PRIMARY KEY (RowNum) WITH FILLFACTOR=100

GO

Speed testing and comparison

The speed comparison Tests will be done using the below queries

--T-SQL version

DBCC DROPCLEANBUFFERS

GO

DBCC FREEPROCCACHE

GO

SET STATISTICS IO ON

SET STATISTICS TIME ON

GO

--=== T-SQL Version ===

DECLARE @RowNum INT, @ItemNumber INT, @ItemValue INT;

SELECT

@RowNum = D.RowNum,

@ItemNumber = V.ItemNumber,

@ItemValue = V.Item

FROM dbo.CsvTest D

CROSS APPLY dbo.DelimitedSplit8K(D.CsvParameter, ',') V

GO

SET STATISTICS TIME OFF

SET STATISTICS IO OFF

GO

--CLR Version

DBCC DROPCLEANBUFFERS

GO

DBCC FREEPROCCACHE

GO

SET STATISTICS IO ON

SET STATISTICS TIME ON

GO

--=== CLR-RegEx Version ===

DECLARE @RowNum INT, @ItemNumber INT, @ItemValue INT;

SELECT

@RowNum = D.RowNum,

@ItemNumber = V.matchId,

@ItemValue = CAST(V.value as int)

FROM dbo.CsvTest D

CROSS APPLY dbo.fn_RegExMatches(D.CsvParameter, '(d+?)(?:,|$)') V

WHERE V.groupId = 1 --Return only GroupID=1 as this group contains the value GroupID=0 contains whole RegExMatch

GO

SET STATISTICS TIME OFF

SET STATISTICS IO OFF

GO

In the CLR RegEx version is used following regular expression : ‘(d+?)(?:,|$)’. It could be also possible to use ‘d+’ in this situation.

Results for 16 elements in string

--=== T-SQL Version ===

SQL Server parse and compile time:

CPU time = 15 ms, elapsed time = 26 ms.

Table 'CsvTest'. Scan count 1, logical reads 262, physical reads 2, read-ahead reads 281, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 1841 ms, elapsed time = 1922 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 1 ms.

--=== CLR-RegEx Version ===

SQL Server parse and compile time:

CPU time = 16 ms, elapsed time = 22 ms.

Table 'CsvTest'. Scan count 5, logical reads 781, physical reads 5, read-ahead reads 281, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

Table 'Worktable'. Scan count 0, logical reads 0, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 77173 ms, elapsed time = 25480 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

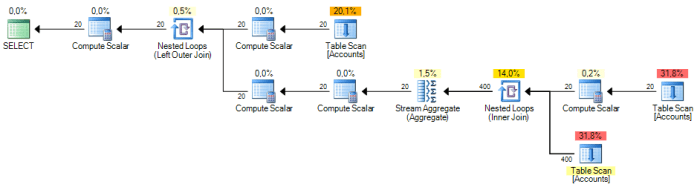

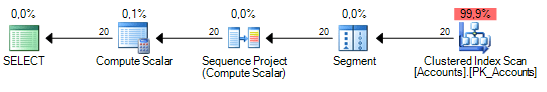

and SQL Profiler output:

Here we can see, that the T-SQL version is unbeatable. The T-SQL took about 2 seconds, while the CLR-RegEx took more than 25 seconds. We can also notice, that the T-SQL version had nearly 3 times less reads.

Results for 100 elements in string

--=== T-SQL Version ===

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 27 ms.

Table 'CsvTest3'. Scan count 1, logical reads 1675, physical reads 4, read-ahead reads 1677, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 19890 ms, elapsed time = 20019 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

--=== CLR-RegEx Version ===

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 18 ms.

Table 'CsvTest3'. Scan count 5, logical reads 1836, physical reads 5, read-ahead reads 1677, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

Table 'Worktable'. Scan count 0, logical reads 0, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 79639 ms, elapsed time = 26602 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 1 ms.

and SQL Profiler output:

Here we can see that the duration for T-SQL version rapidly increased to 20 seconds, while the duration of CRL-RegEx is nearly the same as for 16 elements in string not a whole 26 and half seconds. Also Reds are nearly the same.

Results for 1333 elements in string

--=== T-SQL Version ===

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 25 ms.

Table 'CsvTest2'. Scan count 1, logical reads 74, physical reads 2, read-ahead reads 72, lob logical reads 367392280, lob physical reads 2500, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 2745696 ms, elapsed time = 2758753 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

--=== CLR-RegEx Version ===

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 43 ms.

Table 'CsvTest2'. Scan count 5, logical reads 217, physical reads 5, read-ahead reads 72, lob logical reads 320860, lob physical reads 2494, lob read-ahead reads 170000.

Table 'Worktable'. Scan count 0, logical reads 0, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

SQL Server Execution Times:

CPU time = 170228 ms, elapsed time = 59624 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

And SQL Profiler output:

From this results we can see, that when the length of the string increased and number of elements increased to 1333, the T-SQL version is nearly unusable and the CLR-RegEx is unbeatable by the T-SQL. Here the T-SQL solution took nearly 46 minutes while the CLR-RegEx took only 1 minute. Also the T-SQL solution now has 1 144 times more reads.

CLR-RegEx Testing of very long strings with hi elements count

Here are some test of the CLR-RegEx solution on some very large strings. This test splits single very long string using the CLR-RegEx. The number of elements in the CSV controls the TOP clause in below testing query. We will use it for several scenarios.

DECLARE @csv varchar(max);

DECLARE @RowNum INT, @ItemNumber INT, @ItemValue INT;

WITH TallyBase AS (

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL

SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N UNION ALL SELECT 1 N

),

Tally AS (

SELECT

CAST(ROW_NUMBER() OVER(ORDER BY (SELECT NULL)) AS INT) as N

FROM TallyBase t1, TallyBase t2, TallyBase T3, TallyBase T4

)

SELECT @csv = CAST(STUFF( --=== STUFF get`s rid of the leading comma

( --=== This builds CSV row with a leading comma

SELECT TOP (100000) --Controls the number of CSV elements in each row

',' + CAST(ABS(CHECKSUM(NEWID()))%100000 AS VARCHAR(10))

FROM Tally t3 --Classic cross join pseudo-cursor

CROSS JOIN Tally t4 --can produce row sets up 121 million.

FOR XML PATH('')

)

,1,1,'') AS NVARCHAR(MAX))

SET STATISTICS TIME ON

SELECT

@ItemNumber = V.matchId,

@ItemValue = CAST(V.value as int)

FROM dbo.fn_RegExMatches(@csv, '(d+?)(?:,|$)') V

WHERE V.groupId = 1 --Return only GroupID=1 as this group contains the value GroupID=0 contains whole RegExMatch

GO

SET STATISTICS TIME OFF

100 000 elements

SQL Server Execution Times:

CPU time = 484 ms, elapsed time = 496 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

1 000 000 elements

SQL Server Execution Times:

CPU time = 4352 ms, elapsed time = 20713 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

10 000 000 elements

SQL Server Execution Times:

CPU time = 44320 ms, elapsed time = 88735 ms.

SQL Server parse and compile time:

CPU time = 0 ms, elapsed time = 0 ms.

Conclusion

From the test and measuring done in this blog post we can find, that when we need to split relatively small strings with small elements count (<100 elements) then the T-SQL Solution is the quickest possible and unbeatable.

When we have a moderate count of elements in the string (about 100) then it does not matter if we use the T-SQL or CLR-RegEx solution.

Once the count of elements in the string increases (more than 100) then the CLR-RegEx starts being unbeatable and with high counts of elements (more than 1000, or even millions of elements) then the T-SQL solution is nearly unusable as we can see the results of 10 000 rows with 1333 elements each – the T-SQL version took 46 minutes, while the CLR-RegEx took only 1 minute.

Also during all the tests, there was no occurrence of AppDomain Restart/(Unload/Load) when manipulating large strings using the CLR-RegEx solution. On some blogs I have found, that there was some AppDomain unloading and loading when using CLR but with combination of String.Split method. The RegEx solution doesn’t have such problems.